Sound in VR/AR

The activities this week are all designed to help us consider the key role that future technology such as robots and virtual reality will play in all our lives.

We’ve already discovered how the next generation of medicine and healthcare will be shaped by virtual and augmented reality (VR/AR) applications. But now let’s think about another key aspect of any VR/AR environment – sound!

Without sound, the virtual environment is wholly unrealistic; it is even worse than watching TV with the sound switched off when you’re used to cinema surround sound. Getting the sound right in VR means that users will feel fully immersed in the virtual environment. To achieve full immersion, the spatial characteristics of the sounds need to match those of the visuals: if you see a car moving away from you in the VR environment, you will also expect to hear it moving away from you. If you don’t, the whole impression of being in virtual reality is immediately lost.

One way to recreate an immersive sound field is to use lots of loudspeakers placed all around the listener’s head. In the previous step we saw how a large array of 50 loudspeakers (such as the one we have in the AudioLab at the University of York) can be used to reproduce complex sound scenes with sound sources located all around the listener. Recreating a sound scene over multiple loudspeakers is achieved by extending the techniques used in stereo recording and playback.

Stereo sound

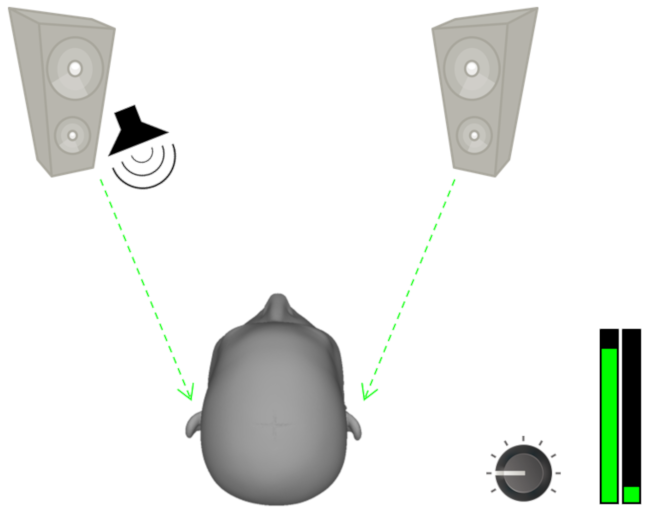

If we have two loudspeakers (stereo), we can move the perceived position of a sound source anywhere along the horizontal line between the two loudspeakers. We can ‘pan’ the sound to the left by increasing the amplitude of the left loudspeaker and lowering the amplitude of the right loudspeaker.

Figure 1: Panning sound to the left by increasing the amplitude of the left loudspeaker.

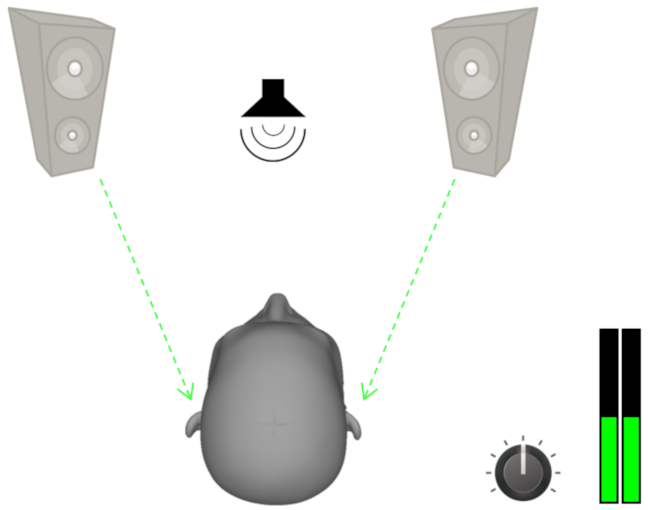

If the sound is played at the same amplitude level through both loudspeakers it will be heard as if coming from directly in between the two.

Figure 2: Placing sound centrally by playing it at the same amplitude through both loudspeakers.
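If you’d like to experiment, the short Python sketch below shows one common way of working out the two loudspeaker gains for a given pan position. The article doesn’t say which ‘pan law’ is used, so this assumes the widely used constant-power (sine/cosine) law, which keeps the total power the same wherever the sound is placed. The function names are made up for illustration.

```python
import math


def constant_power_pan(pan: float) -> tuple[float, float]:
    """Return (left_gain, right_gain) for a stereo pan position.

    pan = -1.0 is hard left, 0.0 is centre and +1.0 is hard right.
    The sine/cosine law keeps left_gain**2 + right_gain**2 equal to 1,
    so the overall loudness stays roughly constant as the sound moves.
    """
    if not -1.0 <= pan <= 1.0:
        raise ValueError("pan must be between -1.0 and 1.0")
    # Map the pan position onto an angle between 0 and 90 degrees (in radians).
    angle = (pan + 1.0) * math.pi / 4.0
    return math.cos(angle), math.sin(angle)


def pan_mono(samples: list[float], pan: float) -> list[tuple[float, float]]:
    """Split a mono signal into (left, right) sample pairs at the given pan position."""
    left_gain, right_gain = constant_power_pan(pan)
    return [(s * left_gain, s * right_gain) for s in samples]


if __name__ == "__main__":
    for p in (-1.0, -0.5, 0.0, 0.5, 1.0):
        left, right = constant_power_pan(p)
        print(f"pan={p:+.1f}  left gain={left:.3f}  right gain={right:.3f}")
```

With `pan = 0.0` both gains come out at about 0.707, so the sound is played at the same level through both loudspeakers and is heard in the middle. Moving `pan` towards -1.0 raises the left gain and lowers the right gain.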

This technique of ‘amplitude panning’ to move sound sources between loudspeakers can be scaled up and used across an array of multiple loudspeakers to reproduce three-dimensional surround sound.
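As a rough illustration of scaling up amplitude panning, this sketch extends the stereo idea to a ring of loudspeakers in the horizontal plane: only the two loudspeakers either side of the source are used, with a constant-power pan law applied between them. It is a simplified two-dimensional example under our own assumptions; established techniques for full three-dimensional arrays include Vector Base Amplitude Panning (VBAP) and Ambisonics, which are more elaborate. The function name `ring_pan` is hypothetical.

```python
import math


def ring_pan(source_az: float, speaker_az: list[float]) -> list[float]:
    """Gains for a horizontal ring of loudspeakers (all azimuths in degrees).

    Only the two loudspeakers either side of the source get signal; between
    them a constant-power (sine/cosine) law is used, as for a stereo pair.
    """
    n = len(speaker_az)
    if n < 2:
        raise ValueError("need at least two loudspeakers")
    # Sort loudspeaker indices by azimuth so that neighbours are adjacent.
    order = sorted(range(n), key=lambda i: speaker_az[i] % 360.0)
    src = source_az % 360.0
    gains = [0.0] * n
    for k in range(n):
        a, b = order[k], order[(k + 1) % n]
        start = speaker_az[a] % 360.0
        # Angular width of this pair, measured from loudspeaker a to loudspeaker b.
        width = (speaker_az[b] - speaker_az[a]) % 360.0 or 360.0
        offset = (src - start) % 360.0
        if offset <= width:
            frac = offset / width  # 0 at loudspeaker a, 1 at loudspeaker b
            gains[a] = math.cos(frac * math.pi / 2)
            gains[b] = math.sin(frac * math.pi / 2)
            return gains
    return gains  # fallback; the pairs cover the whole circle


if __name__ == "__main__":
    ring = [0, 45, 90, 135, 180, 225, 270, 315]
    print([round(g, 3) for g in ring_pan(100.0, ring)])
```

For eight loudspeakers spaced at 45° intervals and a source at 100°, only the loudspeakers at 90° and 135° receive signal, and the one at 90° is louder because the source is closer to it.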

But of course, not many people have access to a large number of loudspeakers arranged in a sphere which can produce enveloping surround sound. Most VR/AR systems designed for personal/home use rely on headphones to deliver surround sound to the user.

So – how do we go about delivering a 360-degree immersive sound scene over a pair of headphones?

Spatial sound

Take a moment to close your eyes and listen very carefully to the sounds all around you. (If you’re somewhere really quiet then you might want to try this exercise somewhere with more noise.) What can you hear? Where are those sounds coming from? What can you hear in front of you? What can you hear behind you? What about above you, below you, or to each side? Try rotating your head – do the sounds change?

Even with our eyes closed, our hearing system has evolved to let us locate sounds in the space around us remarkably well; we call this sound localisation. But how does it work?

To localise sounds in space we rely on ‘binaural cues’: our brain compares the level, timing and overall tone of the sound arriving at our left ear with the sound arriving at our right ear. Differences between the two help us to work out where the sound is placed relative to our own position.
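To make the idea of ‘comparing’ the two ears concrete, here is a sketch of how a simple signal-processing model (not a model of the brain itself) might measure the level and timing differences between a left-ear and a right-ear recording. It assumes both signals have the same length and sample rate `fs`; the level difference comes from the ratio of RMS levels, and the time difference from the peak of the cross-correlation, searched over about ±1 ms. The function name is hypothetical.

```python
import numpy as np


def estimate_ild_itd(left, right, fs, max_lag_s=0.001):
    """Estimate the level and time differences between two ear signals.

    Returns (ild_db, itd_s), where
      ild_db > 0 means the sound is louder at the right ear, and
      itd_s  > 0 means the sound reached the right ear first.
    Both signals must have the same length and sample rate fs (in Hz).
    """
    left = np.asarray(left, dtype=float)
    right = np.asarray(right, dtype=float)
    if left.shape != right.shape:
        raise ValueError("left and right signals must have the same length")

    # Level difference: ratio of the RMS levels, expressed in decibels.
    rms_left = np.sqrt(np.mean(left ** 2))
    rms_right = np.sqrt(np.mean(right ** 2))
    if rms_left == 0 or rms_right == 0:
        raise ValueError("both signals must contain some sound")
    ild_db = 20.0 * np.log10(rms_right / rms_left)

    # Time difference: the lag that best lines the two signals up (the peak
    # of their cross-correlation), checking lags up to about +/- max_lag_s.
    n = len(right)
    max_lag = min(int(round(max_lag_s * fs)), n - 1)
    best_lag, best_score = 0, -np.inf
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            score = np.dot(left[lag:], right[:n - lag])
        else:
            score = np.dot(left[:n + lag], right[-lag:])
        if score > best_score:
            best_lag, best_score = lag, score
    return ild_db, best_lag / fs
```

For example, if the left-ear signal is a copy of the right-ear signal delayed by 20 samples at 48 kHz and halved in amplitude, this returns an ITD of 20/48000 s (about 0.42 ms) and an ILD of roughly 6 dB in favour of the right ear.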

In ‘How Your Ear Changes Sound’ we discovered how sounds are filtered by the outer ear, mainly the pinna. Think about a sound placed to your right, slightly above your head. The acoustic wave from this sound travels directly to your right ear, but has to travel around your head to reach your left ear. Your head and outer ears filter the sound, so that some frequencies are attenuated and the overall tone is altered; these changes are called spectral cues.

There are two more types of binaural cue your brain can use:

- The interaural level difference (ILD) – in our example the sound is quieter at your left ear, mainly because your head casts an acoustic ‘shadow’ that blocks some of the sound (especially at high frequencies) on its way around.

- The interaural time difference (ITD) – in our example the sound reaches your right ear a fraction of a millisecond before it reaches your left ear.

Figure 3: Illustration of the time and level differences at each ear
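How big is ‘a fraction of a millisecond’? A classic approximation, Woodworth’s spherical-head formula, estimates the interaural time difference for a distant source in the horizontal plane from the head radius r, the speed of sound c and the source azimuth θ as ITD ≈ (r/c)(θ + sin θ). The sketch below assumes an ‘average’ head radius of 8.75 cm and c = 343 m/s; real heads vary, so treat the numbers as ballpark figures.

```python
import math

SPEED_OF_SOUND = 343.0  # metres per second, in air at about 20 °C
HEAD_RADIUS = 0.0875    # metres; an assumed "average" head radius


def woodworth_itd(azimuth_deg: float, radius: float = HEAD_RADIUS,
                  c: float = SPEED_OF_SOUND) -> float:
    """Approximate size of the interaural time difference, in seconds.

    Uses Woodworth's spherical-head formula ITD = (r / c) * (theta + sin(theta)),
    where theta is the azimuth in the horizontal plane: 0 degrees is straight
    ahead and 90 degrees is directly to one side. Which ear leads depends on
    the side of the source; this returns the magnitude only.
    """
    if not -180.0 <= azimuth_deg <= 180.0:
        raise ValueError("azimuth must be between -180 and 180 degrees")
    theta = math.radians(abs(azimuth_deg))
    if theta > math.pi / 2:
        # This simple model is front/back symmetric, so a source behind the
        # listener gives the same ITD as its mirror image in front.
        theta = math.pi - theta
    return (radius / c) * (theta + math.sin(theta))


if __name__ == "__main__":
    for az in (0, 30, 60, 90):
        print(f"{az:>3} degrees: ITD = {woodworth_itd(az) * 1000:.3f} ms")
```

For a source directly to one side (90°) this gives roughly 0.66 ms, and zero for a source straight ahead, which is why even tiny timing differences carry useful directional information.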

All of these binaural localisation cues can be captured by measuring Head-Related Transfer Functions (HRTFs), which describe how sound arriving from a particular direction is filtered on its way to each ear. We can then filter a raw sound source through these HRTFs to give the listener the impression that the sound is placed at that position in the space around their head. We actually need to use two HRTFs for each position, a binaural pair (one for the left ear and one for the right ear), or the illusion doesn’t work.
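In signal-processing terms, combining a sound with an HRTF pair means filtering it: in the time domain, the mono sound is convolved with a pair of head-related impulse responses (HRIRs), one per ear. The NumPy sketch below shows that step. Because we don’t have measured HRIRs to hand, `toy_hrir_pair` is a hypothetical, deliberately crude stand-in that models only a time delay and a level drop at the far ear; measured HRIRs also carry the spectral cues.

```python
import numpy as np


def render_binaural(mono, hrir_left, hrir_right):
    """Filter a mono signal through a left/right HRIR pair (by convolution).

    Returns an array of shape (num_samples, 2): column 0 is the left-ear
    signal and column 1 the right-ear signal, ready for headphone playback.
    """
    left = np.convolve(mono, hrir_left)
    right = np.convolve(mono, hrir_right)
    out = np.zeros((max(len(left), len(right)), 2))
    out[:len(left), 0] = left
    out[:len(right), 1] = right
    return out


def toy_hrir_pair(itd_samples, far_ear_gain, length=64):
    """Hypothetical, crude stand-in for a measured HRIR pair.

    Models a source on the listener's right: the right ear receives the sound
    immediately, the left ear receives it itd_samples later and scaled by
    far_ear_gain. Real HRIRs also contain spectral (tone-colouring) cues.
    """
    if not 0 <= itd_samples < length:
        raise ValueError("itd_samples must be within the impulse response length")
    hrir_right = np.zeros(length)
    hrir_right[0] = 1.0
    hrir_left = np.zeros(length)
    hrir_left[itd_samples] = far_ear_gain
    return hrir_left, hrir_right


if __name__ == "__main__":
    fs = 48_000  # sample rate in Hz
    rng = np.random.default_rng(0)
    # Half a second of quiet noise as a stand-in for the seed shaker.
    shaker = rng.uniform(-0.1, 0.1, fs // 2)
    # About 0.65 ms of delay (31 samples at 48 kHz) and half the level at the left ear.
    hrir_l, hrir_r = toy_hrir_pair(itd_samples=31, far_ear_gain=0.5)
    headphone_signal = render_binaural(shaker, hrir_l, hrir_r)
    print(headphone_signal.shape)  # (24063, 2)
```

To place a sound in a real scene you would swap the toy pair for the measured left and right HRIRs for the direction you want, leaving `render_binaural` unchanged.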

Try our spatial sound over headphones

To illustrate this, try listening to these audio clips. Do make sure you are using reasonable quality headphones and that you have them on the right way round.

First, listen to this sound: shaker above you and to your right-hand side.

In this first audio clip you can hear the sound of a seed shaker mixed with the relevant HRTFs to make it sound as if it’s to your right and slightly above you.

Next, listen to this sound: shaker below you and to your left-hand side.

In that audio clip, the seed shaker is mixed with different HRTFs so that it sounds like it’s to your left and slightly below your shoulder.

And our last clip: shaker sound moving around your head.

Here, we have changed the HRTFs during the sound so that the seed shaker seems to move from top right to bottom left around the back of your head to illustrate how binaural audio can be used to place sounds all around the listener.
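One simple way to make a sound move, sketched below under our own assumptions, is to render the sound once for each HRIR pair along a path and crossfade smoothly from one rendering to the next over time. This is not necessarily how the clip above was made, and practical systems often update or interpolate the filters themselves instead; the function `render_moving` and its inputs are hypothetical.

```python
import numpy as np


def render_moving(mono, hrir_pairs):
    """Move a sound along a path by crossfading between binaural renderings.

    hrir_pairs is a list of (hrir_left, hrir_right) arrays for successive
    positions along the path; all of them are assumed to have the same length.
    The sound is rendered once per position, and over the duration of the
    output the mix fades linearly from each rendering to the next.
    """
    if not hrir_pairs:
        raise ValueError("need at least one HRIR pair")
    renders = [
        np.stack([np.convolve(mono, h_left), np.convolve(mono, h_right)], axis=1)
        for h_left, h_right in hrir_pairs
    ]
    num_samples = renders[0].shape[0]
    # Position along the path for every output sample, from 0 to len(hrir_pairs) - 1.
    path_pos = np.linspace(0.0, len(hrir_pairs) - 1, num_samples)
    out = np.zeros((num_samples, 2))
    for k, rendered in enumerate(renders):
        # Triangular weight: 1 when the path is exactly at position k, falling
        # to 0 at positions k - 1 and k + 1, so the weights always sum to 1.
        weight = np.clip(1.0 - np.abs(path_pos - k), 0.0, None)
        out += rendered * weight[:, None]
    return out
```

Passing HRIR pairs for a series of positions from top right, round the back of the head, to bottom left would give a path like the one in the last clip. Using more, closely spaced positions makes the movement smoother.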

Do these sounds move around your head? Does it work for you?

If not, it might be that your head is quite different in shape and size from the ‘average’ head that was used to produce the HRTFs we used here. (These HRTFs are made freely available by the Department of Medical Physics and Acoustics at Carl-von-Ossietzky University, Oldenburg, Germany.)

Figure 4: A small sample of the many differently shaped listeners’ heads

The picture shows just a small sample of the different heads that we’ve been scanning in the University of York AudioLab. As you can imagine, there’s a huge variety in the anatomy of different listeners’ heads. In the near future it will be possible to have your own head scanned and walk away with your very own personal HRTF, meaning that binaural surround sound will work really well for you in your VR/AR system.

Find out more

Try watching and listening to this video, which uses binaural audio techniques to recreate the sounds you might hear during a haircut in a barber’s shop.


Find out more about how binaural sound is being developed further by researchers at the BBC in this clip from the BBC Click programme.


Try our VR singing experience Sing From Your Seat developed by researchers at the University of York AudioLab. Have fun!

Over to you

We’d love to hear more about your thoughts on spatial sound – please comment below in answer to these questions:

- Did the binaural sound files in this article work for you?

- When the technology becomes available, would you be keen to have your own personalised HRTF?

- What do you think might be the benefits or drawbacks of using spatial sound in films, video games or other experiences?

Engineering the Future: Creating the Amazing
