Interaction Techniques in VR

Let’s explore some fundamental interaction concepts for VR.

Introduction

Immersion in a virtual world is enhanced if the user can interact with it, preferably in natural ways. Years of research have produced a myriad of interaction techniques for VR, each of which supports one of three main types of action:

- Selection

- Manipulation

- Locomotion

Let’s look at some examples which you may encounter in your journeys into virtual worlds.

Selection

In its simplest form, selection involves telling the system which object or UI element the user wishes to interact with. Once the user confirms the selection, the selected entity becomes the focus of further interaction inputs. Selection can be performed using controller input, gestures or gaze.

Controller Input (Laser-pointer or Ray-cast)

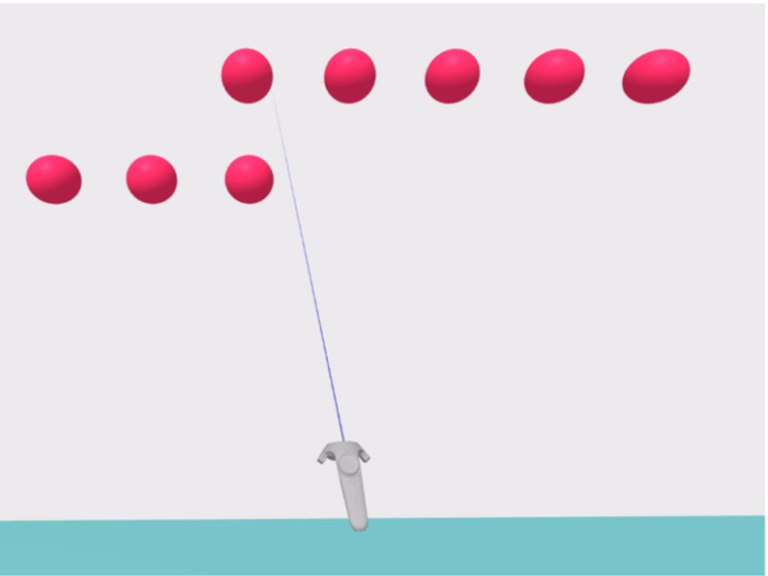

This is the most familiar selection technique. A virtual laser-pointer is fired from the controller (see Figure 1). The user changes the controller orientation to re-orient the beam until it intersects the desired object. The two main challenges with ray-casting are accidental selections and occlusion. Accidental selections occur when the beam intersects another object while the pointer is moving towards the target object. Occlusion occurs when the target object is hidden behind another object. There is no common solution to these challenges, so the designer has to decide how to handle them. Even so, ray-casting is one of the most commonly used input methods because it is simple to implement and low cost.

Figure 1: Laser pointer selection
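
The ray-cast test described above can be sketched in a few lines. This is a minimal illustration, not how any particular VR engine implements picking: it assumes every selectable object is approximated by a bounding sphere, that the ray direction is already unit length, and that the nearest hit wins. The names `Sphere`, `ray_sphere_distance` and `pick` are hypothetical.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class Sphere:
    """Hypothetical selectable object, approximated by a bounding sphere."""
    name: str
    center: Vec3
    radius: float


def ray_sphere_distance(origin: Vec3, direction: Vec3, sphere: Sphere):
    """Distance along a unit-length ray to the first hit, or None if it misses.

    Simplification: a ray that starts inside the sphere counts as a miss.
    """
    oc = tuple(o - c for o, c in zip(origin, sphere.center))
    b = sum(d * v for d, v in zip(direction, oc))        # dot(direction, oc)
    c = sum(v * v for v in oc) - sphere.radius ** 2
    discriminant = b * b - c
    if discriminant < 0:
        return None                                      # ray misses the sphere
    t = -b - math.sqrt(discriminant)                     # nearer of the two roots
    return t if t >= 0 else None


def pick(origin: Vec3, direction: Vec3, objects: list[Sphere]):
    """Return the closest object hit by the ray, or None if nothing is hit."""
    hits = []
    for obj in objects:
        t = ray_sphere_distance(origin, direction, obj)
        if t is not None:
            hits.append((t, obj))
    return min(hits, key=lambda hit: hit[0])[1] if hits else None


near = Sphere("near", (0.0, 0.0, -2.0), 0.5)
far = Sphere("far", (0.0, 0.0, -5.0), 0.5)
selected = pick((0.0, 0.0, 0.0), (0.0, 0.0, -1.0), [far, near])
```

With both spheres on the same line, `pick` returns the nearer one, which is exactly the occlusion problem: the far object cannot be reached with this ray unless the designer adds some other rule.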

Gestures

VR headsets which can capture and interpret actual hand gestures are becoming more common. Using hand-held passive props, active 6DoF controllers and camera capture, a wide range of gestures can be identified by the system. Newer devices, like the Oculus Quest, support native hand-tracking. Familiar techniques carry over to tracked hands: a laser pointer can be projected from the hand (see Figure 2), with a pinch gesture used to confirm selection of a distant object. Objects within reach can simply be grabbed.

Figure 2: Gesture based selection. ‘Laser-pointers’ for far objects and ‘Grab’ for nearby objects
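
As a rough sketch of the idea, a pinch can be detected by checking how close the thumb tip is to the index fingertip, and the choice between ‘grab’ and ‘laser-pointer’ can be made from the distance between hand and object. The thresholds and function names below are assumptions chosen for illustration; real hand-tracking runtimes expose their own joint data and gesture events.

```python
import math

# Assumed values in metres; a real application would tune these.
PINCH_THRESHOLD_M = 0.02
GRAB_RANGE_M = 0.10


def is_pinching(thumb_tip, index_tip, threshold=PINCH_THRESHOLD_M):
    """True when thumb tip and index fingertip are closer than the threshold."""
    return math.dist(thumb_tip, index_tip) < threshold


def selection_mode(hand_pos, object_pos, grab_range=GRAB_RANGE_M):
    """Use a direct grab for objects within reach, a laser pointer otherwise."""
    return "grab" if math.dist(hand_pos, object_pos) <= grab_range else "laser"
```

For example, `selection_mode((0, 0, 0), (2, 0, 0))` falls back to the laser pointer because the object is two metres from the hand.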

Gaze

Gaze-based selection uses either the head-pose or the actual eye-gaze. Gyroscopes inside VR headsets can easily track head-pose, whereas tracking actual eye-gaze requires costlier and more complex eye-tracking hardware. The selection interface element is a reticule (mainly for head-pose) or a cursor displayed in the virtual world. The position of the element is updated based on the head-pose or gaze of the user. The user starts the selection process by looking at the object directly (see Figure 3). The selection can be confirmed by an external controller input or by dwelling on the target for a fixed period of time.

Figure 3: Gaze-based selection pointer with cursor
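
Dwell-based confirmation amounts to a timer that resets whenever the gaze moves to a different target. The sketch below assumes the application already knows which object is under the reticule each frame and passes it in along with the frame time; the class name `DwellSelector` and the one-second default are illustrative assumptions.

```python
class DwellSelector:
    """Confirms a selection after the gaze rests on one target long enough."""

    def __init__(self, dwell_seconds=1.0):
        self.dwell_seconds = dwell_seconds   # assumed default, tune per application
        self._target = None
        self._elapsed = 0.0

    def update(self, gazed_object, dt):
        """Call once per frame. Returns the object when dwell completes, else None."""
        if gazed_object != self._target:
            # Gaze moved to a new target (or to nothing): restart the timer.
            self._target = gazed_object
            self._elapsed = 0.0
            return None
        if gazed_object is None:
            return None
        self._elapsed += dt
        if self._elapsed >= self.dwell_seconds:
            self._elapsed = 0.0              # avoid re-firing on every later frame
            return gazed_object
        return None
```

A shorter dwell time feels more responsive but makes accidental selections more likely, so the threshold is a design decision rather than a fixed value.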

Manipulation

This set of interaction actions occurs once an object is selected by the user.

S/R/T

Once an entity is selected, the user may want to manipulate it: resize it, re-orient it or move it. These fundamental actions scale, rotate or translate (S/R/T) the selected object. A variety of interaction techniques allow each individual action to be performed. The choice of technique depends on the capabilities of the available input controller. Inputs can range from simple controls like a scroll-wheel or a touch-pad to full bimanual gestures like pinch and stretch.
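
To make the three actions concrete, the sketch below derives a scale factor from a two-handed stretch gesture and applies scale, rotation and translation to a single point. It is a simplified illustration under stated assumptions: rotation is limited to the vertical (Y) axis, the order is scale, then rotate, then translate, and the function names are hypothetical.

```python
import math


def stretch_scale(left_start, right_start, left_now, right_now):
    """Scale factor for a bimanual stretch: current hand gap / initial hand gap."""
    start_gap = math.dist(left_start, right_start)
    if start_gap == 0:
        raise ValueError("hands must start apart to define a scale")
    return math.dist(left_now, right_now) / start_gap


def transform_point(point, scale=1.0, yaw_radians=0.0, translation=(0.0, 0.0, 0.0)):
    """Scale a point, rotate it about the Y axis, then translate it."""
    x, y, z = (component * scale for component in point)
    cos_a, sin_a = math.cos(yaw_radians), math.sin(yaw_radians)
    rotated_x = x * cos_a + z * sin_a
    rotated_z = -x * sin_a + z * cos_a
    tx, ty, tz = translation
    return (rotated_x + tx, y + ty, rotated_z + tz)
```

Moving the hands from 0.2 m apart to 0.4 m apart gives a scale factor of about 2, which can then be passed to `transform_point` for each vertex of the selected object.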

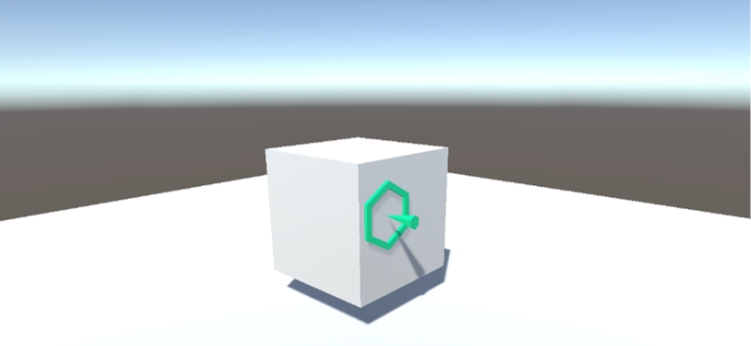

Affordance-driven manipulation

The user may also want to operate the entity based on the affordance it offers, e.g. push a virtual button or crank a lever. Care must be taken by the virtual world creator to expose the affordance in unambiguous ways and support the input capabilities of the hardware used by the user.

Locomotion

This set of interaction techniques enables user movement within the virtual world. They re-position or re-orient the user in the virtual world.

Locomotion and Vection

The most important challenge of locomotion interaction is to reduce or eliminate vection. When a user moves through the virtual world while remaining still in the real world, they experience vection, an illusory sensation of self-motion that can be disorienting. The user’s visual system sees movement while the body’s balance apparatus indicates a lack of movement. This visual-vestibular conflict is a common cause of nausea and simulator sickness. Locomotion interaction techniques manage vection to varying degrees.

On rails

The user’s movement is controlled by the system in an ‘on rails’ fashion, e.g. a roller coaster simulator. This can result in vection very easily and needs to be controlled carefully.

Gaze-directed steering

On rails movement can be extended by allowing the user to look in the direction they wish to move. The movement can then either be at a constant velocity or triggered by another input.
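
A minimal sketch of gaze-directed steering follows. It assumes the application supplies the current gaze direction and frame time, keeps the user on the ground by ignoring the vertical part of the gaze, and moves at constant speed while an optional trigger is held. The function name `steer` and its parameters are illustrative assumptions.

```python
import math


def steer(position, gaze_direction, speed, dt, trigger_held=True):
    """Advance the user along the horizontal gaze direction at constant speed."""
    if not trigger_held:
        return position
    gaze_x, _, gaze_z = gaze_direction        # drop the vertical component
    length = math.hypot(gaze_x, gaze_z)
    if length == 0:
        return position                       # looking straight up or down
    step = speed * dt / length
    x, y, z = position
    return (x + gaze_x * step, y, z + gaze_z * step)
```

Passing `trigger_held=True` on every frame gives the constant-velocity variant, while wiring it to a controller button gives the input-triggered variant.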

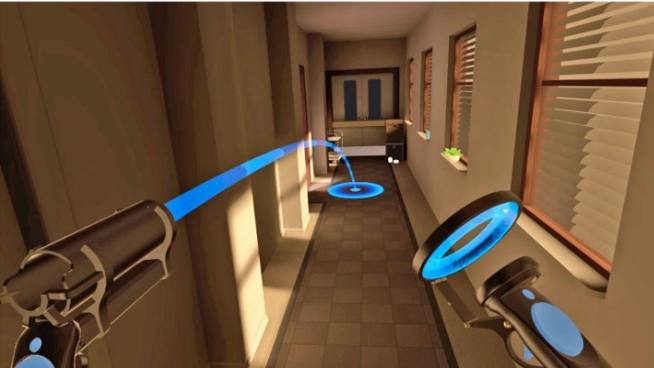

Teleport

Users can use a gaze-based or controller-based ray-cast to select an area of the environment they want to move or ‘teleport’ to. This is often coupled with a rotation element, so that when selecting an area to teleport to, the user can also specify the direction they want to face when they arrive (see Figure 4).

Figure 4: Teleportation based locomotion
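
One simple way to find a teleport destination is to intersect the pointing ray with the floor. The sketch below assumes a flat floor at height zero, a straight ray rather than the curved arc many applications draw, and an arbitrary maximum range; `teleport_target` and `teleport` are hypothetical names.

```python
import math


def teleport_target(origin, direction, max_range=10.0):
    """Point where the ray meets the floor plane y = 0, or None if invalid."""
    ox, oy, oz = origin
    dx, dy, dz = direction
    if dy >= 0:
        return None                      # ray points level or upwards: no floor hit
    t = -oy / dy                         # ray parameter at which height reaches 0
    hit = (ox + t * dx, 0.0, oz + t * dz)
    if math.dist(origin, hit) > max_range:
        return None                      # too far away (assumed range limit)
    return hit


def teleport(target, facing_yaw_radians):
    """New user pose: the chosen floor position plus the chosen facing direction."""
    return {"position": target, "yaw": facing_yaw_radians}
```

Because the move is instantaneous, the user sees no continuous motion between the old and new positions.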

Real movement

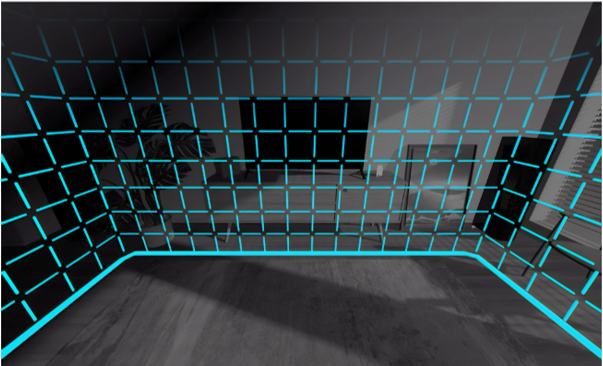

While using VR headsets like the Oculus Quest, users can simply walk around the virtual world. This reduces vection. However, walking around can increase the risk of collision with real-world objects. Manufacturers provide some form of proximity alert system to protect users in this scenario (see Figure 5). This alleviates the collision risk in the absence of an omnidirectional treadmill.

Figure 5: Oculus Quest’s guardian protection system
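
The general idea of a proximity alert can be sketched as a distance check against the edge of the play area. This is not how the Oculus Guardian system is implemented; it is a simplified illustration that assumes a rectangular play area centred on the origin, and the sizes, warning distance and function names are all assumptions.

```python
def distance_to_boundary(x, z, half_width, half_depth):
    """Distance from the user to the nearest wall; negative when outside."""
    return min(half_width - abs(x), half_depth - abs(z))


def boundary_state(x, z, half_width=1.5, half_depth=1.5, warn_distance=0.4):
    """Classify the user's floor position as 'safe', 'warn' or 'outside'."""
    distance = distance_to_boundary(x, z, half_width, half_depth)
    if distance <= 0:
        return "outside"
    if distance < warn_distance:
        return "warn"                    # close enough to show the boundary
    return "safe"
```

An application would typically run this check every frame for the headset and for each controller, and show the boundary as soon as any of them enters the warning zone.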

Design considerations for interactivity

Modern-day PCs and tablet devices have a standardized set of inputs (keyboard, mouse, touch-screen). They also implement a standardized set of interactions (e.g. Ctrl+C is recognizable as the copy command). In contrast, VR inputs and interactions are not standardized. The creator of a virtual world has to make critical decisions on how accessible their world is going to be to the user. These decisions are based on the choice of hardware and the interactions necessary for the VR experience.
