Importance of Evidence Synthesis in Disability Research

What is an evidence synthesis and why do we do them?

A single study is rarely enough to inform a policy or programmatic decision. Its findings may only be relevant to the context in which it was undertaken (and should therefore be replicated in other settings to ensure they hold more widely), and, if the study was conducted poorly, they may be inaccurate or misleading.

To make better decisions, policymakers and service providers need evidence that is set in the context of the entire knowledge base. This is where an evidence synthesis comes in.

An evidence synthesis allows researchers to identify and combine the findings of multiple studies that investigate the same research question. This pooled evidence may come from different countries or settings and from different study designs, giving a fuller understanding of the topic than a single study can provide.

An evidence synthesis could be focused on the effectiveness or feasibility of an intervention, the prevalence of a condition or the relationship between two variables (for instance, poverty and disability). Findings of an evidence synthesis allow us to identify gaps in knowledge and provide comprehensive evidence on a given topic.

Key to all evidence syntheses is the use of rigorous and transparent methodology to select and analyse the available studies. This methodology is often standardised and guided by established best-practice tools and resources, such as the PRISMA guidelines for reporting literature searching and selection. By using transparent, systematic methods, researchers can ensure consistency and replicability, minimise bias, and so produce reliable results.

So, how do you actually conduct an evidence synthesis?

An evidence synthesis can take a variety of forms, with the most common of these being a systematic review. As the name suggests, researchers conducting a systematic review will systematically collect, appraise and synthesise evidence that fits pre-specified eligibility criteria. Multiple authors will review evidence deemed eligible for inclusion in the analysis to help ensure consistency and quality.

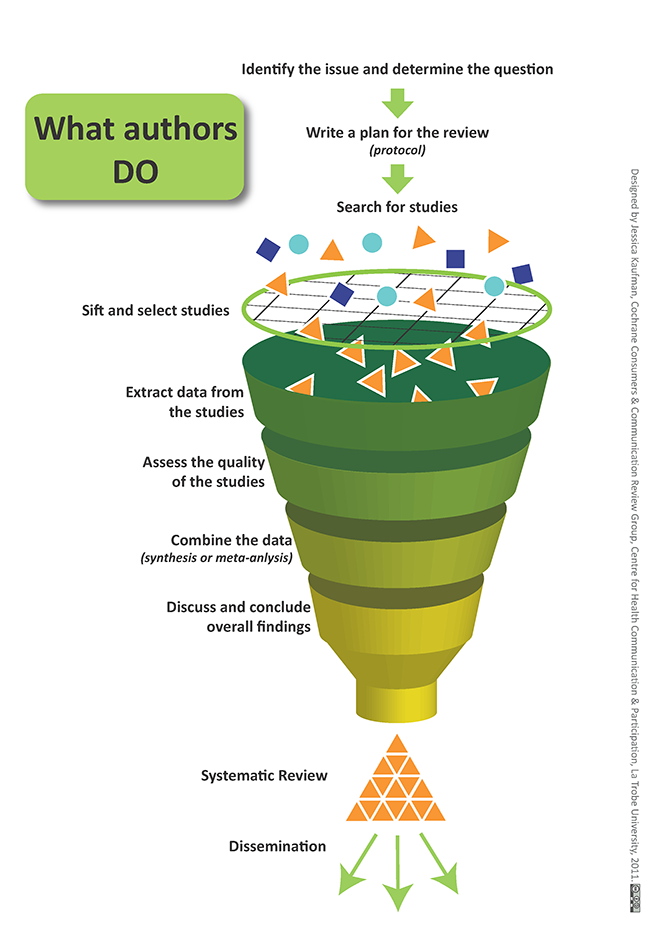

As seen in the graphic above, this process takes a research team from identifying the research question through to disseminating the findings. The methodology centres on identifying evidence, such as published studies, through a systematic search of bibliographic databases. Individual studies that meet the inclusion criteria are then selected and the relevant data are extracted. These data are synthesised to provide comprehensive conclusions and insight. As well as published papers, researchers may draw on grey literature: materials and research that have not been formally published, or that have been published in a non-commercial form. Grey literature may include research reports, conference proceedings or policy briefs, which can be found by systematically searching mainstream search engines and specialised grey literature databases. Grey literature plays a larger role when peer-reviewed publications on the topic of interest are lacking.

To identify studies, researchers search multiple online databases using pre-defined search terms relevant to the topic. For example, if you are interested in mental health, you may use search terms including ‘mental health’, ‘psychological disorder’, ‘psychosocial wellbeing’, ‘depression’, ‘anxiety’, etc. It is important to include as many synonyms for each search term as possible, to maximise the number of relevant results. Preliminary test searches and team discussions can be used to identify the most relevant terms, as can the search terms from papers on a similar topic. A search strategy (often called a search string) can get very complicated, especially as most research investigates multiple areas of interest at once!
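As a minimal sketch of how synonyms are combined into a search string, the Python below joins the synonyms for one concept with OR and joins separate concepts with AND. The function name `build_search_string` and the second concept (a population filter) are assumptions made for illustration, and the generic Boolean syntax shown here would need adapting to the syntax of each bibliographic database.

```python
def build_search_string(concepts):
    """Combine synonym groups into one Boolean search string.

    Synonyms within a concept are joined with OR (any of them may match);
    concepts are joined with AND (every concept must be present).
    """
    groups = []
    for synonyms in concepts:
        # Quote each term so multi-word phrases are searched as phrases.
        quoted = [f'"{term}"' for term in synonyms]
        groups.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(groups)


# Synonyms taken from the mental health example in the text.
mental_health = [
    "mental health",
    "psychological disorder",
    "psychosocial wellbeing",
    "depression",
    "anxiety",
]
# Hypothetical second concept, added only to show how concepts are combined.
population = ["child", "adolescent"]

search_string = build_search_string([mental_health, population])
print(search_string)
```

Adding a synonym widens the search (more records match an OR group), while adding a concept narrows it (records must match every AND group), which is why strings for multi-topic questions grow so quickly.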

Authors evaluate the records returned by the search and select the relevant studies, based on the pre-defined eligibility criteria. These criteria may include the population characteristics (e.g. children), the intervention of interest (e.g. cognitive behavioural therapy), the outcome you wish to measure (e.g. depression), the type of study (e.g. randomised controlled trial or non-randomised controlled study), or the geographic location of the study (e.g. studies in sub-Saharan Africa).
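The screening step can be sketched in Python as a check of each record against the pre-defined criteria. Everything here is illustrative: the `Record` fields, the `CRITERIA` values (which mirror the examples in the text) and the two records are hypothetical, and in a real review these judgements are made by people reading titles, abstracts and full texts rather than by exact string matching.

```python
from dataclasses import dataclass


@dataclass
class Record:
    title: str
    population: str
    intervention: str
    outcome: str
    design: str
    region: str


# Hypothetical eligibility criteria, mirroring the examples in the text.
CRITERIA = {
    "population": {"children"},
    "intervention": {"cognitive behavioural therapy"},
    "outcome": {"depression"},
    "design": {"randomised controlled trial", "non-randomised controlled study"},
    "region": {"sub-Saharan Africa"},
}


def failed_criteria(record, criteria=CRITERIA):
    """Return the names of the criteria a record fails (empty list = eligible)."""
    return [
        name
        for name, allowed in criteria.items()
        if getattr(record, name) not in allowed
    ]


# Invented records, for illustration only.
records = [
    Record("Hypothetical study A", "children", "cognitive behavioural therapy",
           "depression", "randomised controlled trial", "sub-Saharan Africa"),
    Record("Hypothetical study B", "adults", "cognitive behavioural therapy",
           "depression", "randomised controlled trial", "Europe"),
]

for record in records:
    reasons = failed_criteria(record)
    status = "include" if not reasons else "exclude: " + ", ".join(reasons)
    print(record.title, "->", status)
```

Returning the failed criteria, rather than a simple yes or no, keeps a record of why each study was excluded, which supports the transparency that systematic methods aim for.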

Selection is carried out by at least two researchers, to ensure that no relevant study is missed and no ineligible study is included. A database search may well produce thousands of results, which are eventually whittled down to just a handful. It would be common for just 20 papers to be selected from, say, 4,000 results. The total number of results will depend on the breadth of your research question and the amount of existing evidence published on the topic. Some reviews may have 1,000 initial results, others may have 20,000! Narrowing your research question and appropriately limiting your search string will help keep the number manageable for your team.
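One way to picture screening by two researchers is to compare their independent decisions and flag any disagreements for discussion. The sketch below assumes each reviewer's decisions are stored as a dictionary mapping a record ID to True (include) or False (exclude); the function name `reconcile`, the record IDs and the decisions are all hypothetical.

```python
def reconcile(reviewer_a, reviewer_b):
    """Split records into agreed includes, agreed excludes, and conflicts.

    Both arguments map record ID -> True (include) or False (exclude)
    and are assumed to cover the same set of records.
    """
    included, excluded, conflicts = [], [], []
    for record_id in sorted(reviewer_a):
        a, b = reviewer_a[record_id], reviewer_b[record_id]
        if a != b:
            conflicts.append(record_id)  # needs discussion or a third reviewer
        elif a:
            included.append(record_id)
        else:
            excluded.append(record_id)
    return included, excluded, conflicts


# Invented decisions, for illustration only.
reviewer_a = {"rec1": True, "rec2": False, "rec3": True, "rec4": False}
reviewer_b = {"rec1": True, "rec2": False, "rec3": False, "rec4": False}

included, excluded, conflicts = reconcile(reviewer_a, reviewer_b)
print("Included:", included)
print("Excluded:", excluded)
print("To discuss:", conflicts)
```

For a sense of scale, selecting 20 papers from 4,000 results corresponds to an inclusion rate of 0.5%, so most of the screening effort goes into ruling records out.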

Once the studies are selected, researchers extract the relevant data from each one and appraise its methodological quality. This appraisal helps us judge the strength of the conclusions and recommendations.

The extracted information can be pooled together in a variety of ways, depending on what best suits the research question. Ultimately, this synthesised evidence should provide the evidence context and allow us to draw conclusions: for instance, that the intervention is effective when implemented in schools but not in hospitals, or that the available evidence comes mainly from high-income settings and more evidence is needed from low- and middle-income countries before a recommendation can be made.

Types of evidence synthesis

Although it is the most common, the systematic review is not the only method of evidence synthesis available. Other options include:

- Narrative review: A more relaxed, non-standardised methodology is adopted to provide a general review of the literature. This method ultimately leads to a less comprehensive review.

- Rapid review: This method adopts systematic methods, but in the context of time constraints, where a decision is needed quickly. This may mean using a number of shortcuts (such as a simpler search strategy) which could mean some studies are missed.

- Scoping review: Similar in methods to a systematic review, but aims to provide a much broader overview of the research evidence rather than answer a very specific question; it searches for breadth, not depth. It is often used when the evidence base for a topic is limited, providing a broad overview with which to identify gaps in knowledge, define concepts in a field, and inform future research.

- Meta-analysis: This statistical technique is often conducted in conjunction with a systematic review, and allows a researcher to combine effect sizes across studies. This is particularly useful when looking at the effectiveness of an intervention.

- Umbrella review: Essentially a review of systematic reviews, this method often answers a broader question than a single systematic review would target. This is often a useful technique to help inform policy-makers. Take a look at Step 3.16 to learn about ICED’s use of umbrella reviews in developing an “evidence portal” on disability.

The type of evidence synthesis selected will be determined by the research question to be answered, the ultimate use of the evidence and the resources/capacity of the research team.
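To illustrate what combining effect sizes means in the meta-analysis entry above, here is a minimal Python sketch of one common approach, fixed-effect inverse-variance pooling, in which more precise studies (those with smaller standard errors) receive more weight. This is an assumption about method rather than a description of any particular review, the effect sizes and standard errors are invented, and a real analysis would also consider heterogeneity between studies and random-effects models.

```python
import math


def pool_fixed_effect(effects, standard_errors):
    """Pool effect sizes using fixed-effect inverse-variance weighting.

    Each study is weighted by 1 / SE^2, so precise studies count for more.
    Returns the pooled effect, its standard error and a 95% confidence interval.
    """
    weights = [1 / se ** 2 for se in standard_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1 / total_weight)
    ci = (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se)
    return pooled, pooled_se, ci


# Invented effect sizes and standard errors for three hypothetical studies.
effects = [0.30, 0.50, 0.40]
standard_errors = [0.10, 0.20, 0.10]

pooled, pooled_se, ci = pool_fixed_effect(effects, standard_errors)
print(f"Pooled effect: {pooled:.3f}")
print(f"Standard error: {pooled_se:.3f}")
print(f"95% CI: {ci[0]:.3f} to {ci[1]:.3f}")
```

The pooled standard error is smaller than that of any single study in the example, which is the statistical counterpart of the point that combined evidence is stronger than one study in isolation.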

Examples of evidence synthesis in practice

To understand how evidence synthesis methods are used in practice, let’s take a look at an example conducted by the International Centre for Evidence in Disability (ICED): “Poverty and disability in low- and middle-income countries: A systematic review”.

In this systematic review, ICED researchers identified 15,500 papers through a search of bibliographic databases. Of these, 150 were included for analysis. Overall, 81% of the included studies reported that people with disabilities are more likely to be living in poverty. This trend was mostly consistent across impairment type, region and age groups.

This evidence, coming from 150 studies, is much more powerful than if we had looked at just one study in isolation. It has allowed us to interpret consistent findings from across different regions of the world, using different study designs, to say with confidence that people with disabilities are more likely to live in poverty.

This gives policy-makers a rationale for poverty alleviation programmes for this group, and that rationale would not have been as strong without the use of evidence synthesis techniques. As a result of this paper, ICED has now worked with NGOs and governments on a number of social-protection and poverty alleviation schemes, including research in the Maldives and Nepal.

Summary

Transparent, rigorous evidence syntheses are needed to make health care decisions. These established methods allow us to understand a topic in the context of the global knowledge base and provide comprehensive evidence with which to support people with disabilities.


Global Disability: Research and Evidence
