Wrapping up: Week 4

This article is from the free online

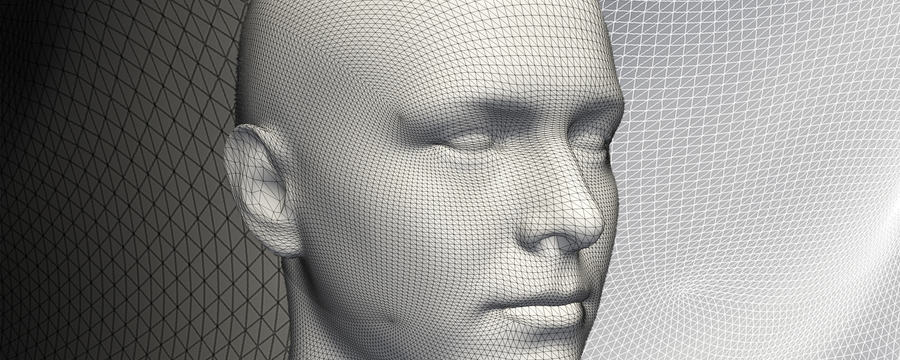

Statistical Shape Modelling: Computing the Human Anatomy
